So, to help avoid deadlocks you can consider a couple of different strategies: Setting autocommit=True will avoid the deadlocks because each individual SQL statement will be automatically committed, thus ending the transaction (which was automatically started when that statement began executing) and releasing any locks on the updated rows. If those processes use long-lived transactions that generate a large number of such locks then the chances of a deadlock are greater.

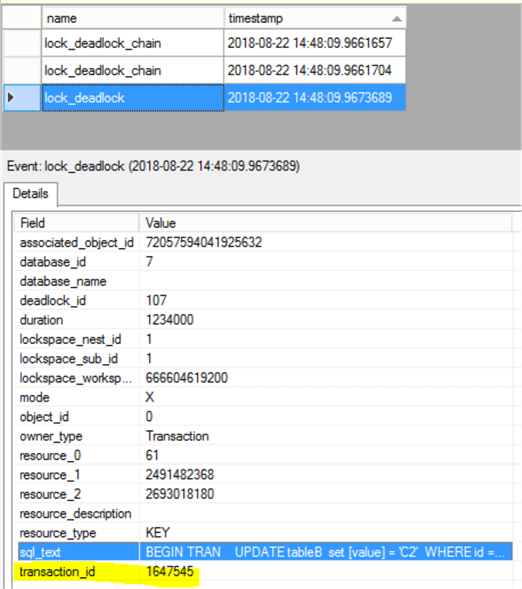

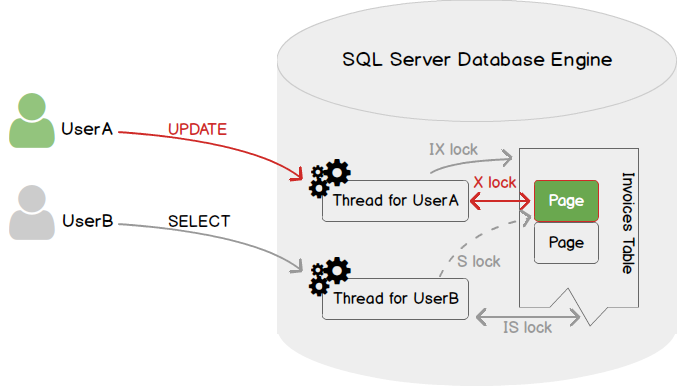

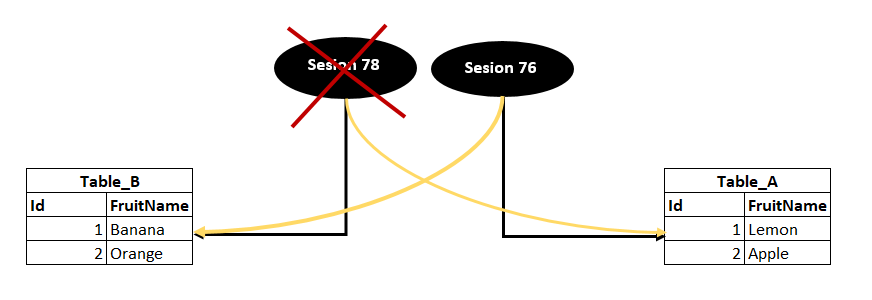

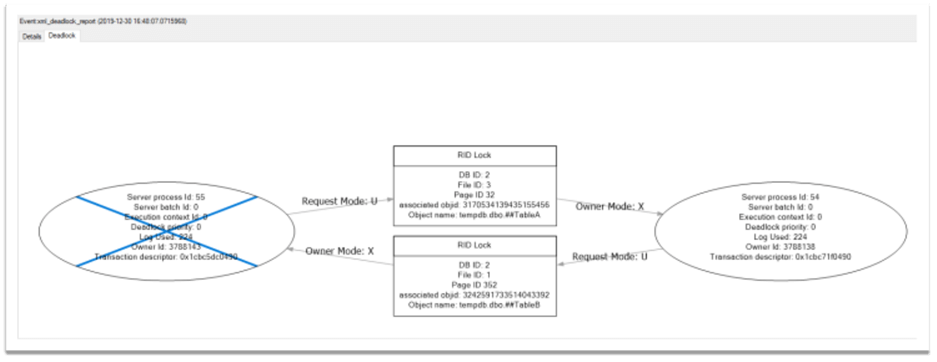

Under conditions of high concurrency, deadlocks can occur if multiple processes generate conflicting locks. Unless the database is configured to support SNAPSHOT isolation by default, a write operation within a transaction under Read Committed isolation will place transaction-scoped locks on the rows that were updated. The default transaction isolation level in SQL Server is "Read Committed".

Therefore when the first SQL statement is executed, ODBC begins a database transaction that remains in effect until the Python code does a. When establishing a connection, pyodbc defaults to autocommit=False in accordance with the Python DB-API spec. Setting autocommit=True in the dbargs seems to prevent the deadlock errors, even with the multiple curs.executes. If I can trust this, then my main problem is how to get some output for logging.ĮDIT Setting autocommit=True in the dbargs seems to prevent the deadlock errors, even with the multiple curs.executes. I see that both rows were inserted into the table tableX, even a subsequent curs.fetchall() fails with the "Previous SQL was not a query." error, so it seems that pyodbc execute does execute everything.not just the first statement. Nevertheless, if I try something like curs.execute('INSERT INTO testX (entid, thecol) VALUES (4, 5) INSERT INTO testX (entid, thecol) VALUES (5, 6) SELECT * FROM testX ' Nevertheless, except for the inability to fetch my my real "big query", consisting of multiple selects, updates, inserts actually works and populates multiple tables in the db. ProgrammingError Traceback (most recent call last) So if the first statement is not a query you get that error: In : curs.execute("PRINT 'HELLO' SELECT 'BYE' ") Previous SQL was not a query.Įxperiments within the shell suggest pyodbc ignores everything after the first statement: In : curs.execute("SELECT 'HELLO' SELECT 'BYE' ") I tried declaring a variable and recording various things to it (did we have to insert or update the hotel for example) before finally SELECT as outputstring, but doing a fetch after the execute in pyodbc always fails with : No results. Nevertheless, it seems impossible to get any output if I do a big command like this. As I understand pyodbc is suppose to only handle single statements, however it does seem to work, and I see my tables populates with no deadlock errors. However, I don't really understand why this makes a difference, and also I'm not entirely sure it is safe to run big SQL blocks with multiple SELECTS, INSERTS, UPDATES in a single pyodbc curs.execute. If instead of upserting the data in the above manner, I generate one big SQL command, which does the same thing (checks for hotel, upserts it, records the id to a temporary variable, for each room checks if exists and upserts against the hotel id var etc), then do only a single curs.execute(.) in the python code, then I no longer see deadlock errors. It is a bit more complicated than this in practice, but this illustrates that the Python code is using multiple curs.executes before and during the loop. These upserts are done using `curs.execute(.)`. If not, then insert it using the hotel id to reference the hotels table row.Įlse update it. In the rooms table (which is foreign keyed to the hotels table). This is done by `curs.execute(.)`Ģ) Python loop over the hotel rooms scraped. The code that actually does the insert/update schematically looks like this: 1) Check if hotel exists in hotels table, if it does update it, else insert it new. Was deadlocked on lock resources with another process and has beenĬhosen as the deadlock victim.

I am encountering deadlock errors like: [Failure instance: Traceback: : There can be multiple celery threads and multiple servers running this code and simultaneously writing to the db different items. The data items consist of some basic hotel data (name, address, rating.) and some list of rooms with associated data(price, occupancy etc). I have some code that writes Scrapy scraped data to a SQL server db.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed